Facebook Ads Split Testing: Part 2

If you’ve read my last blog post then no doubt you will be well aware of the issues faced when A/B testing Facebook ads (if you haven’t, then make sure you read it here first). Fortunately, Facebook have pulled their fingers out with a new whitelist feature that solves some of the issues I raised (if you don’t have this feature yet then you really do need to read my last blog post for some workarounds!). In this post we will look at the new split test feature in a little more detail to find out what’s improved, and look at some issues that remain unsolved.

The Issue with Split Testing in Facebook

Although Facebook have got audience split testing down to a tee, the major issue I identified in my last blog post is that you will experience audience overlap when split testing variables such as bid type and placement (you need to target the same audience when testing such factors). This means that your ads are competing with each other, and the same user is likely to see the same ad from each ad set being tested (it’s a fair assumption that a user is more likely to react to the first ad they see than the second).

This new update means that when you create a split test within your campaign, Facebook will split this campaign into two or more different ad sets to be tested against each other. This may sound very similar to the manual approach to split testing which I mentioned previously, yet the major difference is that your ‘potential reach will be randomised and split between advert sets to ensure an accurate test’. If you manually create two ad sets to be tested against one another then these ad sets will be competing against each other. Fact.
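To picture what a randomised, non-overlapping split means in practice, here is a minimal Python sketch. It is not Facebook’s actual implementation (which isn’t public); the function name `split_audience`, the user IDs and the fixed seed are all made up for illustration. The idea is simply to shuffle the audience and deal it out so that each user lands in exactly one ad set.

```python
import random


def split_audience(user_ids, n_ad_sets=2, seed=0):
    """Shuffle the audience, then deal users round-robin into disjoint groups.

    Hypothetical helper for illustration only; not part of any Facebook API.
    """
    rng = random.Random(seed)  # fixed seed so the example is repeatable
    shuffled = list(user_ids)
    rng.shuffle(shuffled)
    # Slice i takes every n-th user starting at position i, so groups never share a user.
    return [shuffled[i::n_ad_sets] for i in range(n_ad_sets)]


audience = [f"user_{i}" for i in range(10)]
group_a, group_b = split_audience(audience)

# An empty intersection means no user can be served both ad sets.
print(set(group_a) & set(group_b))  # set()
```

With two manually created ad sets targeting the same audience there is no such guarantee, which is exactly why they end up bidding against each other.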

Facebook’s New Split-Test Feature

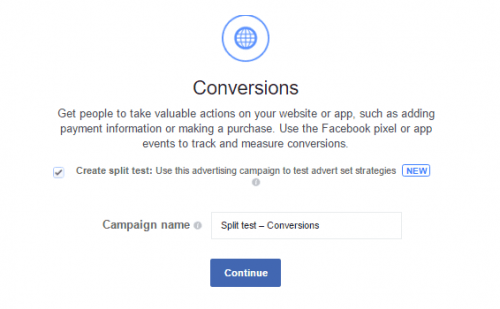

It is pretty easy to create your own split test in Facebook Ads Manager. Just select ‘Create Split Test’ when you create your campaign:

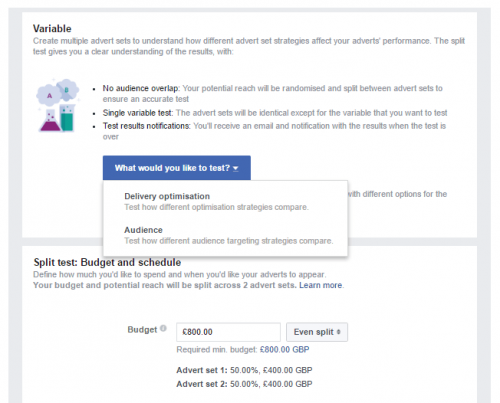

This stage will take you to the Ad Set settings screen, which should look familiar enough with the exception of one new option.

This option allows you to test variations of either your bid settings or targeting, and assign a budget weighting to each variable.

Enter your options for both variables, create the creative as you normally would, then launch!

When the test is finalised, you will receive an email from Facebook to inform you as to which ad set has come out trumps.

Limitations

Whilst this is a long-overdue feature, I cannot help but think that many issues with Facebook split testing remain unsolved. Without a doubt the biggest win for me is that this feature prevents audience overlap between two different ad sets which are targeting the same audience. I will certainly be using it to test different Max CPC bids and different bid types against the same audience, but I can’t help but think that the other option for split testing (Audience) is somewhat redundant because there was never any issue with split testing audiences in Facebook anyway. If you are testing two different audiences then there is no need for Facebook to ensure there is no audience overlap, because the audiences are different to begin with. Granted, some users may fit within both audiences that you are testing, but you can exclude audiences within the ad set settings to ensure that this doesn’t happen!

That’s not my only bone to pick with this new feature. If you manage Facebook ads for your business then you will no doubt know that ad performance can vary greatly depending on the placement options that you select. As a matter of fact, I always separate my ad sets by ad placement/device, but this does not prevent audience overlap (where the same user can see the same ad on both desktop and mobile devices). This isn’t always a bad thing because we know that the user path to conversion often takes place across multiple devices, but it really wouldn’t be hard for Facebook to add placement as a variable option within this feature.

Furthermore, this new feature is extremely limiting. Facebook do not allow you to change anything apart from the variable being tested. This makes sense because if you are A/B testing, then all variables need to remain equal except for the variable being tested. Fair enough.

However, like many digital marketing agencies, we use Google Analytics as the hub where we report on all conversions generated. I get that Facebook reports on conversions, but this is inadequate if you are working on multi-channel campaigns because of the different attribution models being used. It is best to stick with one analytics platform of choice, otherwise your conversion data just will not add up. In order to attribute Facebook conversions within Google Analytics, I insert a piece of code called a UTM tag into each ad link, and this tells Google which campaign my ad clicks came from. Unfortunately, I cannot assign different UTM tags to the ads being tested, because Facebook needs both ads to be identical in order for it to be a split test. This is very irritating because the user can’t see the UTM tag that I have hidden in the ad link, and therefore it would not affect the outcome of the test.
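For anyone who hasn’t used UTM tags before, they are just extra query parameters appended to the ad’s destination URL. The Python sketch below shows one way to build such a link using the standard library. The helper name `add_utm`, the example.com landing page and the campaign values are all hypothetical; only the `utm_source`, `utm_medium`, `utm_campaign` and `utm_content` parameter names are the standard ones Google Analytics reads.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse


def add_utm(url, source, medium, campaign, content=None):
    """Append UTM parameters to a URL, keeping any query string it already has.

    Hypothetical helper for illustration; the values passed in are made up.
    """
    parts = urlparse(url)
    query = dict(parse_qsl(parts.query))  # preserve existing parameters
    query.update(
        {"utm_source": source, "utm_medium": medium, "utm_campaign": campaign}
    )
    if content:
        # utm_content is the usual place to tell two ads or ad sets apart
        query["utm_content"] = content
    return urlunparse(parts._replace(query=urlencode(query)))


tagged = add_utm(
    "https://www.example.com/sale",
    source="facebook",
    medium="paid-social",
    campaign="summer-sale",
    content="ad-set-a",
)
print(tagged)
```

Giving each ad set its own `utm_content` value is what would let Google Analytics separate the two sides of the test, and it is precisely this per-ad difference that the split test feature does not allow.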

There’s more. Remember in my last post I discussed the issues faced when split testing ad creative at either an ad or an ad-set level? (I did tell you to read that post, didn’t I?!) Well, nothing’s changed there. Nada.

In summary

Don’t get me wrong, I think that it is great that Facebook have recognised that they need to improve the A/B testing experience, but it’s unfortunate that it hasn’t been implemented very well. I think this approach is great for users who may be new to online advertising because much of this process is completely automated. Facebook will even email you to inform you of the winner. However, there are many issues present which just don’t cut it for me. I’m more than happy to manually create my own split tests and analyse the data myself. I just wish that all ad sets within a campaign that share the same audience had their reach randomised and split between them so that there is never any overlap in the first place. I don’t think that this would be too difficult for Facebook to implement seeing as it is already available within the new split test feature, but the new feature just doesn’t give me the level of control over my campaigns that I’m used to when manually split testing.

Mr Zuckerberg, if you are reading this, then please take heed!

Follow my contributions to the blog to find out more about Paid Media for eCommerce businesses or sign up to the ThoughtShift Guest List, our monthly email, to keep up-to-date on all our blog posts, guides and events.